Don't take the first solution that comes to mind

Learn how to solve problems more effectively by exploring what makes a problem complex and which methods help in approaching it.

Before anything else, let's clarify the scope of this article. We are not going to discuss cases where the decision makers are familiar with the environment and the number of variables is small. Here, we address complex problems: those marked by uncertainty, many interacting variables, and/or gaps in knowledge. For these non-simple cases, the first solutions that come to mind are usually not optimal.

Why’s that? The science behind it involves how we form and link our thoughts, how we absorb and present information, how we discuss it with others, and how we attempt solutions and improvements to the problem we are investigating.

We are going to briefly present some characteristics of complex systems and go through some aspects of problem-solving techniques. After that, we will present some ideas that can help us deal with some aspects of these complex systems.

Hi, I'm a complex system

The literature on complex systems is vast, and we will not dive deep into all of it. The main sources I relied on for this article are [Senge], [Cook] and [Meadows].

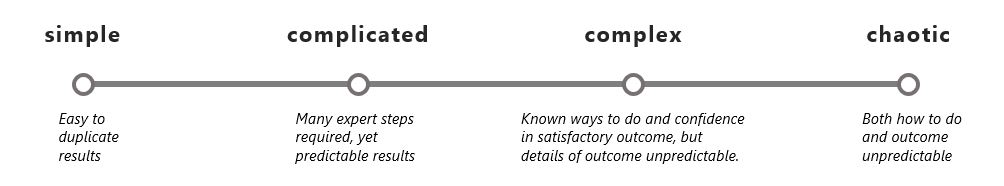

The following picture [Yoshida] illustrates some differences between understanding and predictability of a given context:

[Cook] presents several aspects of complex systems, aiming to explain how such systems fail. Important variables there include the number of failures (known and latent) in the system, the difficulty of understanding root causes, change, and human interaction with these systems.

Software development is also considered a complex activity. According to [Booch], “this inherent complexity derives from four elements: the complexity of the problem domain, the difficulty of managing the development process, the flexibility possible through software, and the problems of characterizing the behavior of discrete systems”.

In this article, we will address complex problems and discuss some problems from the realm of software development.

Time is a really important variable

In some legacy systems, or in a complicated piece of code, the view of the many variables that interact with each other becomes blurry. Some may argue that what we’re missing is better system documentation and hard requirements. But even with good requirements, over time the system is likely to operate differently from what was specified, because (usually very) creative users and scenarios absent from the original requirements create unforeseen situations.

Time is a critical factor not only for documentation, but also for the systems themselves. Technology evolves and upgrades happen. Parts of the system are added and removed. The people interacting with the system change (knowledge gaps broaden). All of these contribute to a bigger mismatch between documentation and the system’s behavior.

Dealing with the unknown and the unexpected

Even assuming that we know exactly how the system should work, presenting a comprehensive diagram of all complex scenarios and the interactions between multiple system components is far from trivial. Many documents that I've seen on this were oversimplified, so that only the most obvious factors (users, active components) were shown.

There is value in that, but the objective of such diagrams is to clarify very particular interactions, not the complete behavior of the system. And what often happens when things go wrong? These simplified diagrams miss exactly the trigger that was not considered an important variable in that context. Then we usually hear something like this: “The system should never get to that state”, “no one would ever do that” or even “the chances of this happening are too small”. Just wait.

I’m confident many of you have debugged some code or problem that felt like this: “this matches what is expected in these situations, but there is something different”, or “I have no clue why this is happening”. The thought process when dealing with the unknown is absolutely amazing, and we usually see more progress when we abandon some premises about how we believe things work or how they are expected to work.

Accepting that we do not control all the variables in a complex system is paramount to digging deeper into investigations and figuring out the details of unusual behaviors. Knowing the limits of our knowledge is really important. With that, we can keep learning and extending our expertise around the knowledge we currently have. But how do we acquire knowledge in the first place?

Break it to understand it

Ever since early childhood, we have been learning different subjects in school that seemed rather independent. This is the scientific method at work. Its idea is to break a big problem into parts small enough that their behavior can be understood in detail. Being an expert in a narrow area has a downside, though: missing the context around the object under investigation.

It is easy to forget that the object was part of something bigger, which was itself embedded in another part, which, in turn, existed in a given environment. The whole context and this system are complex, and when the number of variables involved is too big, we lack tools to analyse them, discuss them and make iterative changes that improve how the variables relate to each other.

Cause-effect is not working

The cause-effect relationship is another aspect of the scientific method. We are very familiar with the linearity it provides: if this is the condition, then that is the effect. This can be true in local environments and on a small scale. But when we scale up and many cause-effect relationships are at play, they start to interfere with each other. These relationships are often not as independent as we believe.

The factors that initially allowed a clear understanding of each individual if-then rule no longer work independently. Because of that, some mysterious things happen. Think about how many physics lessons treated friction as nonexistent. That simplification is extremely helpful for illustrating some aspects of an experiment, but we should keep in mind that it is a simplification when we analyze real-world scenarios.

So should we just give up? Not so fast! The following sections describe some ways to understand the problem better and get closer to a solution.

Dealing with complexity better

Systems thinking: This technique offers a different way of approaching complex problems. In linear thinking it’s harder to see the interference between system variables, and this is where systems thinking comes in handy [Senge], [Meadows]. In this approach, more variables are added to our diagrams, and we look at the impact they have on each other and on the system over time. This is closely related to the feedback loop from control systems.

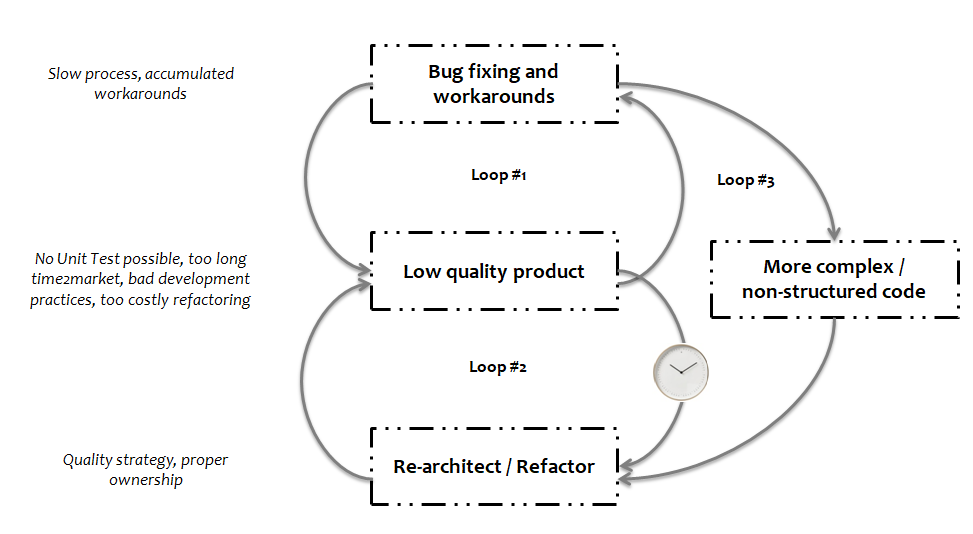

To illustrate this, let’s think about one software product that is already deployed with new features being developed. Let’s make the codebase unstructured and, on top of that, let’s create a few workaround solutions that were required to fix some defects quickly. These factors combined might lead to slower feature development, lack of ownership and a risk on long term maintenance.

Field defects arise from lack of testing, which might be caused by the non-structured code. Refactoring one problematic area of the code is something that was mentioned earlier as a solution. But it would take some time to be done, longer than fixing the most recent defect. This behavior iterates for every defect that comes in.

The loop #1 in the following picture illustrates the behavior of short-term solutions. The loop #2 illustrates the fundamental solution. The loop #3 illustrates the increasing complexity of the software and the difficulty in going to the fundamental solution after numerous workarounds are in place.

With time, the code gets more complex because of all the workarounds, and the refactoring becomes harder to do because of the increased complexity. Yet the refactoring would enable simpler code and more testing, leading to fewer defects and more efficient feature development.
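The three loops described above can be sketched as a toy simulation. This is only an illustration, not a model of any real project: the function name `simulate`, the starting complexity of 10, and the cost factors 0.1 and 0.5 are all made-up assumptions, chosen just to make the dynamics visible.

```python
from typing import List, Optional, Tuple


def simulate(defects: int, refactor_every: Optional[int] = None) -> Tuple[List[float], float]:
    """Toy model of the workaround/refactoring loops.

    Returns the effort spent on each defect and the final code complexity.
    Every constant below is an illustrative assumption, not a measurement.
    """
    complexity = 10.0  # hypothetical starting complexity of the codebase
    workarounds = 0    # workarounds currently living in the code
    efforts: List[float] = []

    for n in range(1, defects + 1):
        if refactor_every and n % refactor_every == 0:
            # Loop #2 (fundamental solution): refactoring is expensive, and
            # loop #3 makes it more expensive the more complex the code is.
            efforts.append(complexity * 0.5)
            complexity -= workarounds  # refactoring removes the workarounds
            workarounds = 0
        else:
            # Loop #1 (short-term solution): a quick workaround is cheap now,
            # but it leaves the code a little more complex for next time.
            efforts.append(complexity * 0.1)
            workarounds += 1
            complexity += 1.0

    return efforts, complexity


if __name__ == "__main__":
    for horizon in (20, 100):
        quick, quick_complexity = simulate(horizon)
        mixed, mixed_complexity = simulate(horizon, refactor_every=5)
        print(f"{horizon} defects | workarounds only: effort {sum(quick):.1f}, "
              f"complexity {quick_complexity:.0f} | refactor every 5th: "
              f"effort {sum(mixed):.1f}, complexity {mixed_complexity:.0f}")
```

With these assumed numbers, the workaround-only strategy needs less total effort over 20 defects, while over 100 defects the periodic refactoring needs less and keeps complexity flat. Where the crossover happens depends entirely on the assumed constants.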

These diagrams help present more iterations and some behavior over time. It is easier to identify important aspects of the system when we are looking at such diagrams. Combining the diagrams with discussions is even better, and that is our next hint.

Collecting different perspectives: people perceive things differently. Having different perspectives on a problem is another extremely valuable way of getting closer to a solution. I believe all of you remember at least one movie or book in which experts from very different areas got together in a venture: navigation experts, geologists, mathematicians, physicians and so on. These individuals are needed because nobody really knows what lies ahead. With such a diverse skill set, the chances of getting through the challenges and questions increase.

Retire the knife, bring the glue: the scientific approach makes a problem small enough to be understood. Now it’s time to understand the bigger context. And like in a puzzle, we might have a few known parts, but some blank areas that still need some attention.

Spectrum of all possibilities is too wide: It is not feasible to analyse all the possible variables for all possible scenarios. Because of this, when we don't get the expected results, it is worth running an exercise that brings in a few additional, closely related variables. This investigation might shed some light and let us move further. But do we need all variables and their impact? It depends on the accuracy required and the complexity of the problem at hand. “The best is the enemy of the good”. In complex problems the number of variables is generally too big for any extensive combinatorial analysis.
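A quick calculation shows how fast the number of scenarios grows. The sketch below is only an illustration: the helper names `scenario_count` and `pairwise_interactions` are hypothetical, and the choice of three values per variable (say low, medium, high) is an assumption.

```python
from math import comb, prod
from typing import Sequence


def scenario_count(values_per_variable: Sequence[int]) -> int:
    """Number of distinct scenarios if every combination had to be checked."""
    return prod(values_per_variable)


def pairwise_interactions(variable_count: int) -> int:
    """Number of variable pairs that could influence each other."""
    return comb(variable_count, 2)


if __name__ == "__main__":
    for n in (5, 10, 20, 30):
        # Assumption: each variable can take only 3 values.
        print(n, "variables:", scenario_count([3] * n), "scenarios,",
              pairwise_interactions(n), "pairs")
```

Even with only three values per variable, ten variables already give 59049 combinations, which is why narrowing the investigation to a few closely related variables is usually the practical choice.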

We should keep in mind that some solutions will certainly be in areas that at first do not seem closely related to the problem at all. This thought leads us to...

Thinking outside the box: Brainstorming sessions are a very useful tool for collaboration and gathering ideas. In these meetings we should keep our “filters” more off than on. Afterwards, for obvious reasons, it is impossible to implement all the ideas and solutions presented. That’s when filtering and prioritizing those ideas becomes important, and it is a critical step once again! Once we have a candidate solution and start the change, we may see a benefit locally or in the short term. In the long term, however, that benefit may turn out to be smaller than expected or, even worse, the change may have a negative impact on other parts of the system, requiring a rollback or further improvements on top of the solution to address the new findings.

Inspect and Adapt: this is a foundational aspect of Lean and Agile. Whether we are talking about team performance, complex problems, code craftsmanship, or software development practices, a common link between them is that none of these is perfect on day zero. There is always room for improvement. Inspection might eventually tell us that something should change in an implementation we were very proud of. Sometimes it means that we need to change behaviors, and we all know these changes are hard and take time. Individually or collectively, we will make mistakes and will need rounds of improvement before a given problem has a much lower impact than the other problems we might be facing.

Conclusion

This article is about solving complex problems. Such problems often show superficial symptoms, and at first, without much investigation or information, it is tempting to fix the symptom and believe we are really fixing the problem. Unfortunately, we might be creating an even bigger problem to solve in the future, once many “temporary” solutions have piled up.

Some tools help us avoid this trap: forgetting, as much as possible, that we are experts in a given field; analysing the facts as coldly as possible; gathering the important variables and looking systematically at how they interact with each other; communicating these with diagrams; and listening to other people's perspectives.

I'll close this with a quote from H. L. Mencken that I really like:

For every complex problem there is an answer that is clear, simple, and wrong.

References

- Cook, Richard I. How Complex Systems Fail. Chicago, IL: Cognitive Technologies Laboratory, University of Chicago, 1998.

- Senge, Peter. The Fifth Discipline: The Art and Practice of the Learning Organization. Doubleday Business, 1990.

- Meadows, Donella H. Thinking in Systems: A Primer. Chelsea Green Publishing, 2008.

- Booch, Grady; Maksimchuk, Robert; Engle, Michael; Young, Bobbi; Conallen, Jim; Houston, Kelli. Object-Oriented Analysis and Design with Applications. Addison-Wesley Professional, 2007.

- Yoshida, Takeshi. Thinking about Training Support for Change Agents, Transformation Leads & Innovation Managers. agile-od.com. [ONLINE] Available at: https://agile-od.com/innovation-managers-toolkit. Accessed 20 July 2020.